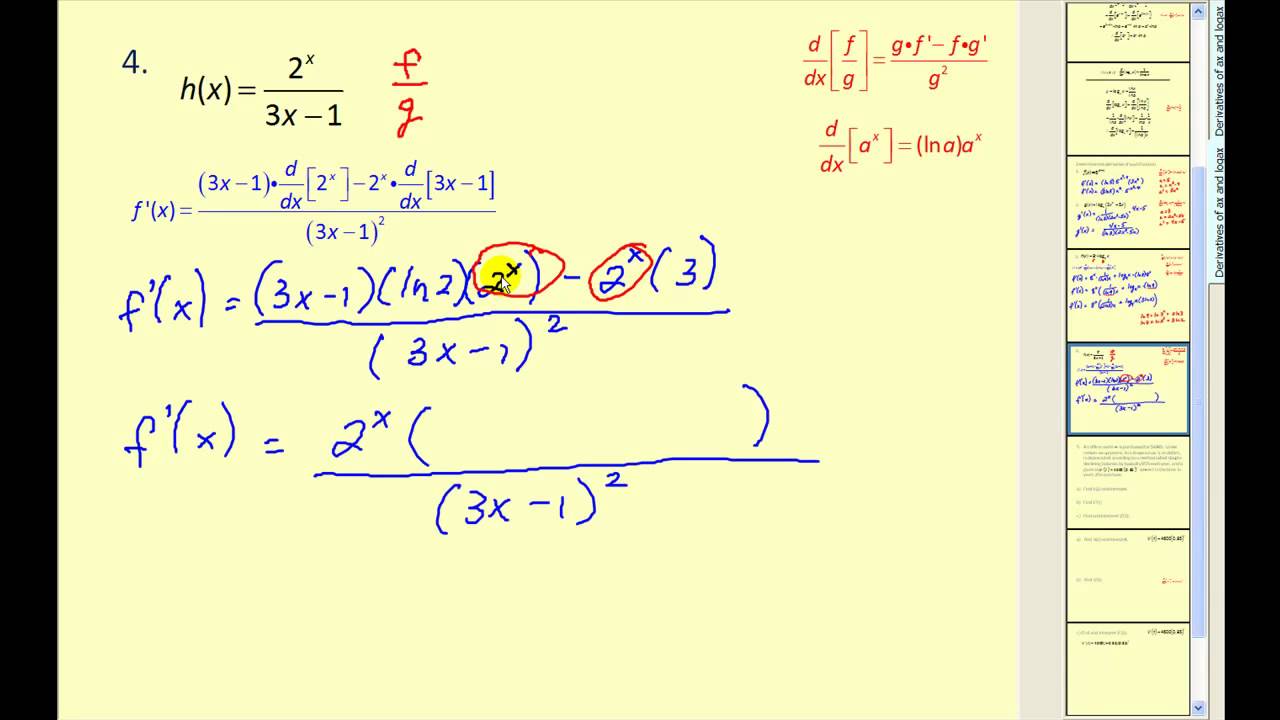

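Let \(A\) be an \(n \times n\) square matrix. For a function \(f : \mathbb{R}^{n \times n} \to \mathbb{R}\), define its derivative \(\frac{\partial f}{\partial A}\) as an \(n \times n\) matrix where the entry in row \(i\) and column \(j\) is \(\frac{\partial f}{\partial A_{ij}}\). For some functions, the derivative has a nice form. In particular,

\[ \frac{\partial}{\partial A} \log \det A = \left(A^{-1}\right)^{T}. \]

(Here, we restrict the domain of the function to matrices with positive determinant.) The most straightforward proof I know of this is direct computation: showing that the \((i,j)\)th entry on the LHS is equal to that on the RHS. (An alternate proof is given in Section A.4.1 of Stephen Boyd's *Convex Optimization*.)

Before we get there, we need to define some other terms:

- The minor of \(A_{ij}\), denoted \(M_{ij}\), is the determinant of the \((n-1) \times (n-1)\) matrix that remains after removing the \(i\)th row and \(j\)th column from \(A\).
- The cofactor matrix of \(A\), denoted \(C\), is an \(n \times n\) matrix such that \(C_{ij} = (-1)^{i+j} M_{ij}\).
- The adjugate matrix of \(A\), denoted \(\operatorname{adj}(A)\), is simply the transpose of \(C\).

These terms are useful because they relate to both matrix determinants and inverses: \(A^{-1} = \frac{1}{\det A} \operatorname{adj}(A)\), so the \((i,j)\)th entry on the RHS is \(\left(A^{-1}\right)_{ji} = \frac{C_{ij}}{\det A}\).

On the other hand, by the chain rule the \((i,j)\)th entry on the LHS is \(\frac{1}{\det A} \frac{\partial \det A}{\partial A_{ij}}\). By the cofactor expansion of the determinant along row \(i\), \(\det A = \sum_{k} A_{ik} C_{ik}\), so by the product rule,

\[ \frac{\partial \det A}{\partial A_{ij}} = \sum_{k} \frac{\partial A_{ik}}{\partial A_{ij}} C_{ik} + \sum_{k} A_{ik} \frac{\partial C_{ik}}{\partial A_{ij}}. \]

For any \(k\), the elements of \(A\) which affect \(C_{ik}\) are those which do not lie on row \(i\) or column \(k\), so the second sum vanishes. Since \(\frac{\partial A_{ik}}{\partial A_{ij}}\) is \(1\) when \(k = j\) and \(0\) otherwise, the first sum reduces to \(C_{ij}\). The LHS entry is therefore \(\frac{C_{ij}}{\det A}\), which matches the RHS.

In the previous posts we covered the basic derivative rules, trigonometric functions, logarithms and exponents. The differentiation of an exponential function with respect to a variable is equal to the product of the exponential function and the natural logarithm of its base:

\[ \frac{d}{dx} a^{x} = a^{x} \ln a. \]

It is read as "the derivative of \(a\) raised to the power of \(x\) with respect to \(x\) is equal to the product of \(a^{x}\) and \(\ln a\)." As the logarithmic function with base \(a\) and the exponential function with the same base form a pair of mutually inverse functions, the derivative of the logarithm follows from this rule: if \(y = \log_{a} x\), then \(x = a^{y}\), so \(\frac{dx}{dy} = a^{y} \ln a = x \ln a\), and

\[ \frac{d}{dx} \log_{a} x = \frac{1}{x \ln a}. \]

14.5.13 Derivative Rule: a sufficient condition for \(f \in O(g)\), where \(f\) and \(g\) are … This derivative will overtake the derivative of \(\log n\), which is \(c/n\) (for base \(b\), the constant is \(c = 1/\ln b\)).

Logarithmic differentiation is a method used to differentiate functions by employing the logarithmic derivative of a function: rather than differentiating the function itself, we differentiate its logarithm, using \(\frac{d}{dx} \ln f(x) = \frac{f'(x)}{f(x)}\) and then multiplying back by \(f(x)\). It is particularly useful for functions where a variable is raised to a variable power.

It seems reasonable that a good estimate of the unknown parameter \(\theta\) would be the value of \(\theta\) that maximizes the probability, errrr... the likelihood, of getting the data we observed. (So, do you see from where the name "maximum likelihood" comes?) So, that is, in a nutshell, the idea behind the method of maximum likelihood estimation.

But how would we implement the method in practice? Well, suppose we have a random sample \(X_1, X_2, \cdots, X_n\) for which the probability density (or mass) function of each \(X_i\) is \(f(x_i; \theta)\). Then, because the observations in a random sample are independent, the joint probability mass (or density) function of \(X_1, X_2, \cdots, X_n\), which we'll (not so arbitrarily) call \(L(\theta)\), is:

\[ L(\theta) = f(x_1; \theta) \cdot f(x_2; \theta) \cdots f(x_n; \theta) = \prod_{i=1}^{n} f(x_i; \theta). \]

Note that the maximum likelihood estimator of \(\sigma^2\) for the normal model, \(\hat{\sigma}^2 = \frac{1}{n} \sum_{i=1}^{n} (X_i - \bar{X})^2\), is not the sample variance \(S^2 = \frac{1}{n-1} \sum_{i=1}^{n} (X_i - \bar{X})^2\). So how do we know which estimator we should use for \(\sigma^2\)? Well, one way is to choose the estimator that is "unbiased." Let's go learn about unbiased estimators now.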
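The log-determinant identity \(\frac{\partial}{\partial A} \log \det A = (A^{-1})^T\) from the matrix derivation above can be checked numerically. The sketch below is a minimal, dependency-free illustration under my own assumptions: the helper names (`submatrix`, `det`, `cofactor_matrix`, `grad_logdet_formula`, `grad_logdet_numeric`) and the example matrix are hypothetical, the determinant is computed by cofactor expansion (only sensible for small matrices), and the comparison uses central finite differences rather than anything from the original derivation.

```python
import math


def submatrix(A, i, j):
    """Matrix left after deleting row i and column j; its determinant is the minor M_ij."""
    return [row[:j] + row[j + 1:] for k, row in enumerate(A) if k != i]


def det(A):
    """Determinant by cofactor expansion along the first row (fine for small n)."""
    n = len(A)
    if n == 1:
        return A[0][0]
    return sum((-1) ** k * A[0][k] * det(submatrix(A, 0, k)) for k in range(n))


def cofactor_matrix(A):
    """C_ij = (-1)^(i+j) * M_ij."""
    n = len(A)
    return [[(-1) ** (i + j) * det(submatrix(A, i, j)) for j in range(n)]
            for i in range(n)]


def grad_logdet_formula(A):
    """(A^{-1})^T computed entrywise as C_ij / det A, as in the derivation."""
    d = det(A)
    C = cofactor_matrix(A)
    n = len(A)
    return [[C[i][j] / d for j in range(n)] for i in range(n)]


def grad_logdet_numeric(A, h=1e-6):
    """Central finite differences of log det A with respect to each entry A_ij."""
    n = len(A)
    G = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            plus = [row[:] for row in A]
            minus = [row[:] for row in A]
            plus[i][j] += h
            minus[i][j] -= h
            G[i][j] = (math.log(det(plus)) - math.log(det(minus))) / (2 * h)
    return G


# Hypothetical example matrix with positive determinant.
A = [[4.0, 1.0, 0.5],
     [2.0, 3.0, 1.0],
     [0.0, 1.0, 2.0]]

F = grad_logdet_formula(A)
N = grad_logdet_numeric(A)
print(max(abs(F[i][j] - N[i][j]) for i in range(3) for j in range(3)))
```

The printed maximum difference should be tiny. Reusing the cofactor expansion for `det` keeps the check close to the argument in the text, at the cost of exponential running time, so for real work a library determinant and inverse would be the better choice.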
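The single-variable rules discussed above (\(\frac{d}{dx} a^x = a^x \ln a\), \(\frac{d}{dx} \log_a x = \frac{1}{x \ln a}\)) and logarithmic differentiation can be checked the same way. This is a sketch under stated assumptions: \(y = x^x\) is my own choice of "a variable raised to a variable power", and `derivative`, `d_exp`, `d_log` and `d_x_to_the_x` are hypothetical helper names. For \(y = x^x\), taking logs gives \(\ln y = x \ln x\), so \(\frac{y'}{y} = \ln x + 1\) and \(y' = x^x (\ln x + 1)\).

```python
import math


def derivative(f, x, h=1e-6):
    """Central finite-difference approximation of f'(x), used only as a check."""
    return (f(x + h) - f(x - h)) / (2 * h)


def d_exp(a, x):
    """d/dx a^x = a^x * ln a."""
    return a ** x * math.log(a)


def d_log(a, x):
    """d/dx log_a x = 1 / (x ln a); for fixed a this is c / x with c = 1 / ln a."""
    return 1.0 / (x * math.log(a))


def d_x_to_the_x(x):
    """Logarithmic differentiation of y = x^x for x > 0:
    ln y = x ln x  =>  y'/y = ln x + 1  =>  y' = x^x (ln x + 1)."""
    return x ** x * (math.log(x) + 1)


for x in (0.5, 1.0, 2.0, 3.0):
    # Closed-form derivative next to its finite-difference estimate.
    print(x, d_x_to_the_x(x), derivative(lambda t: t ** t, x))
```

The two columns printed for each \(x\) should agree to several decimal places; the finite-difference helper is only a sanity check, not a substitute for the closed forms.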
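For the normal model, maximizing \(L(\theta)\) (equivalently \(\log L(\theta)\)) gives closed-form estimates \(\hat{\mu} = \bar{x}\) and \(\hat{\sigma}^2 = \frac{1}{n} \sum_{i} (x_i - \bar{x})^2\), which is why the MLE of \(\sigma^2\) differs from \(S^2\). The sketch below is a minimal illustration under my own assumptions: the sample is made-up data, and `normal_log_likelihood` and `normal_mle` are hypothetical helper names. It uses the standard library's `statistics.variance`, which divides by \(n - 1\), to compute \(S^2\) for comparison.

```python
import math
import statistics


def normal_log_likelihood(data, mu, sigma2):
    """log L(mu, sigma^2) = sum_i log f(x_i; mu, sigma^2) for the normal model."""
    n = len(data)
    return (-0.5 * n * math.log(2 * math.pi * sigma2)
            - sum((x - mu) ** 2 for x in data) / (2 * sigma2))


def normal_mle(data):
    """Closed-form maximizers: mu_hat is the sample mean,
    sigma2_hat is the sum of squared deviations divided by n (not n - 1)."""
    n = len(data)
    mu_hat = sum(data) / n
    sigma2_hat = sum((x - mu_hat) ** 2 for x in data) / n
    return mu_hat, sigma2_hat


sample = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # made-up data for illustration
mu_hat, sigma2_hat = normal_mle(sample)
s2 = statistics.variance(sample)  # sample variance S^2, divides by n - 1
print(mu_hat, sigma2_hat, s2)

# Nudging either parameter away from the MLE should lower the log-likelihood.
best = normal_log_likelihood(sample, mu_hat, sigma2_hat)
for d_mu, d_s2 in [(0.1, 0.0), (-0.1, 0.0), (0.0, 0.1), (0.0, -0.1)]:
    print(d_mu, d_s2, normal_log_likelihood(sample, mu_hat + d_mu, sigma2_hat + d_s2) < best)
```

Here \(\hat{\sigma}^2\) comes out smaller than \(S^2\) by the factor \(\frac{n-1}{n}\), which is exactly the gap that motivates looking at unbiased estimators.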